Help with running a sequential model across multiple GPUs, in order to make use of more GPU memory - PyTorch Forums

Imbalanced GPU memory with DDP, single machine multiple GPUs · Discussion #6568 · PyTorchLightning/pytorch-lightning · GitHub

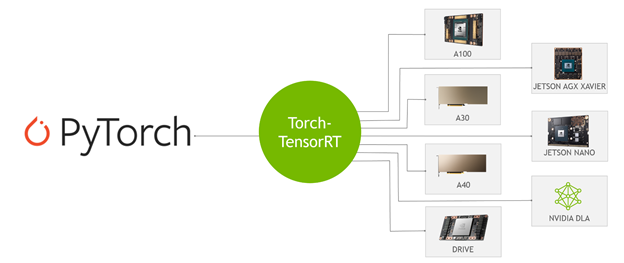

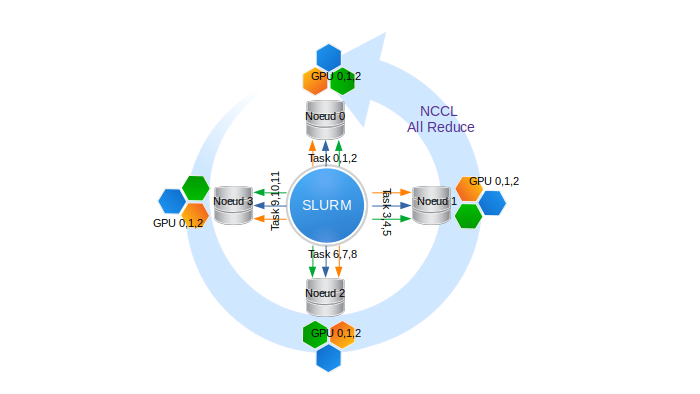

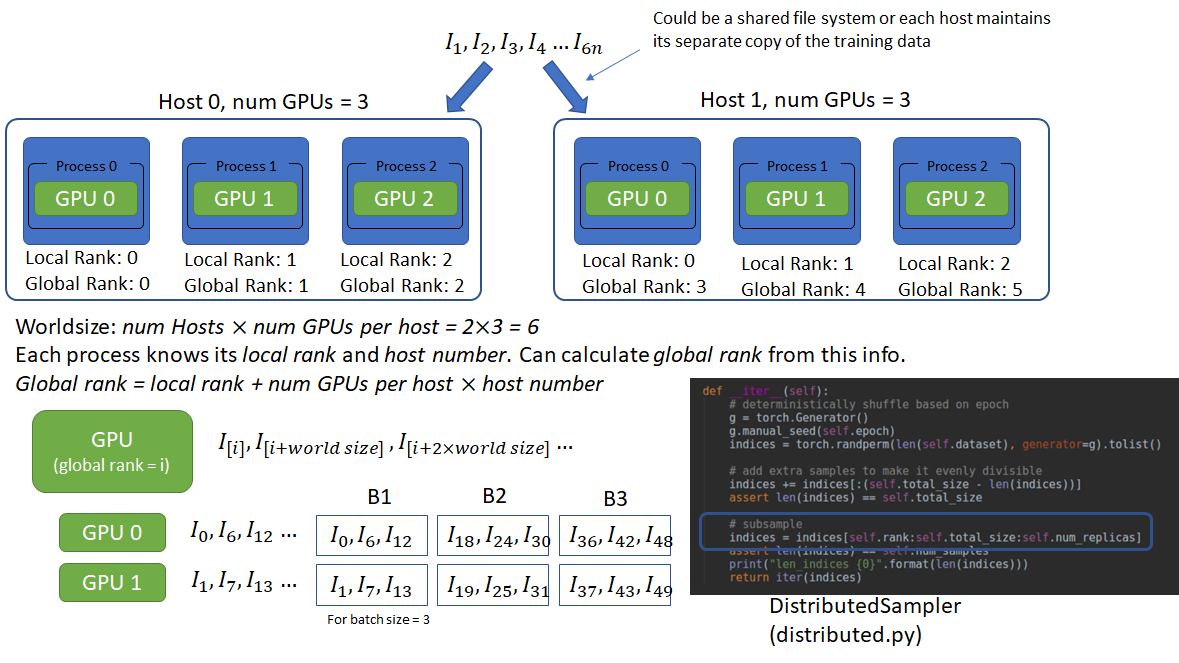

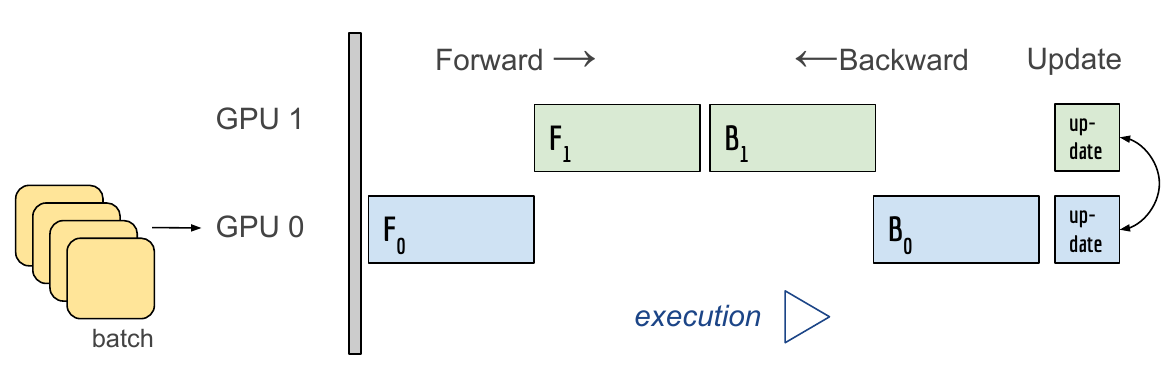

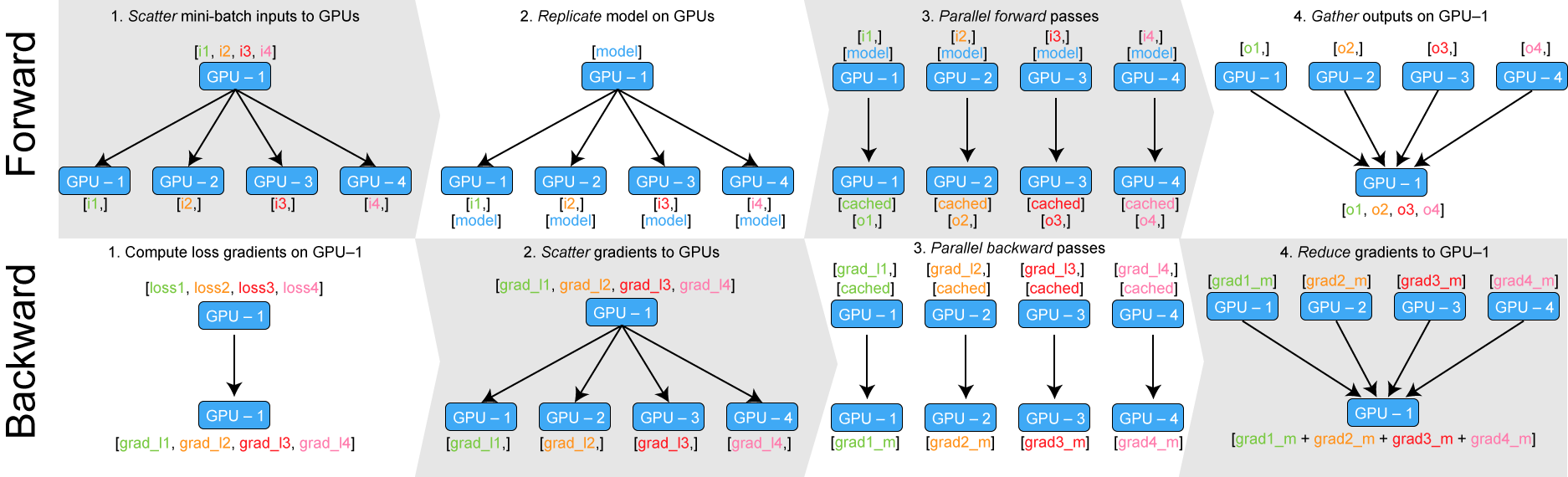

How distributed training works in PyTorch: distributed data-parallel and mixed-precision training | AI Summer

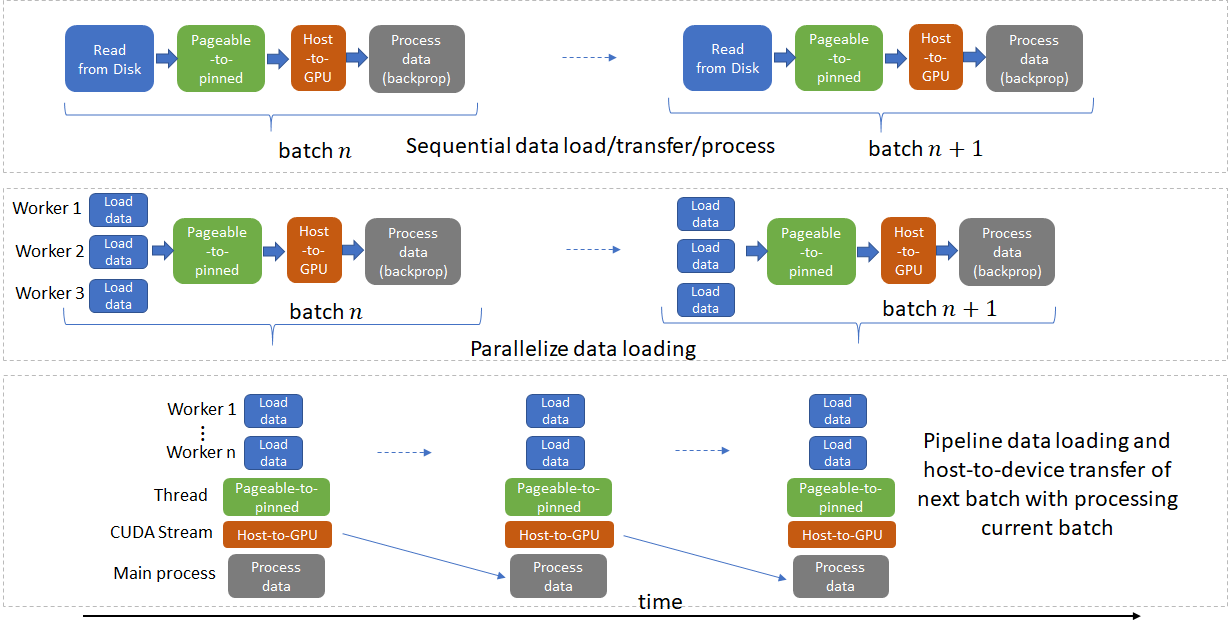

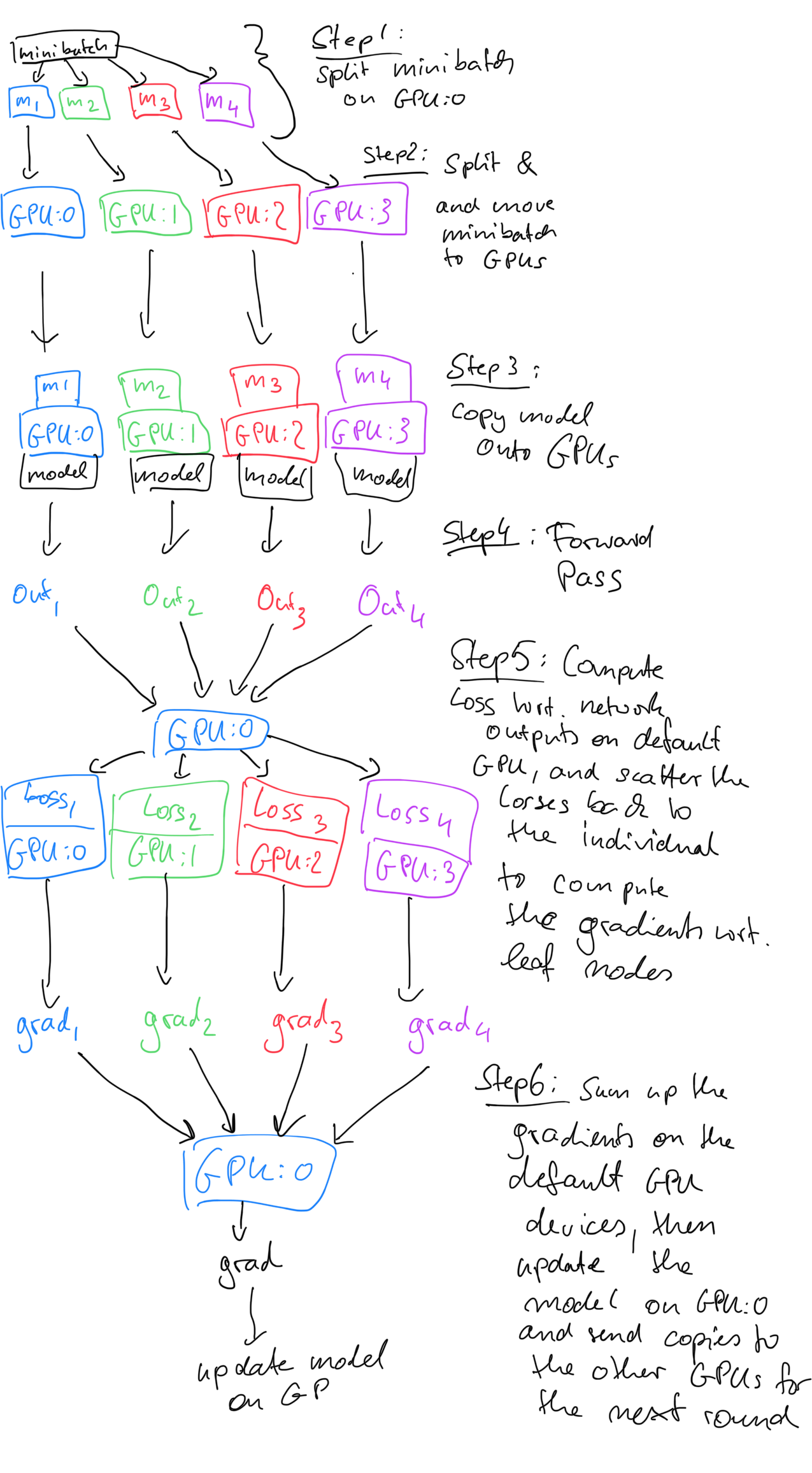

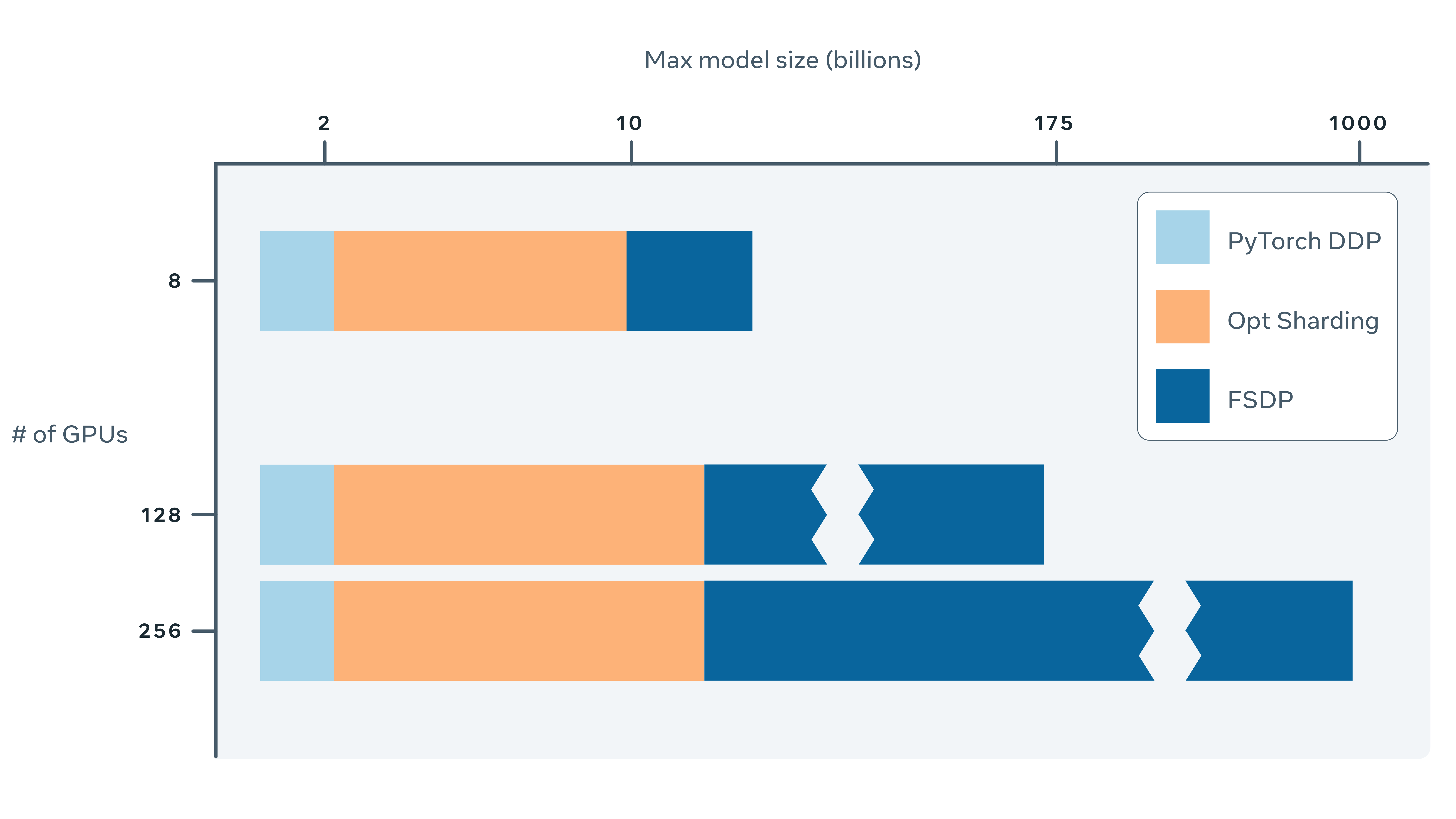

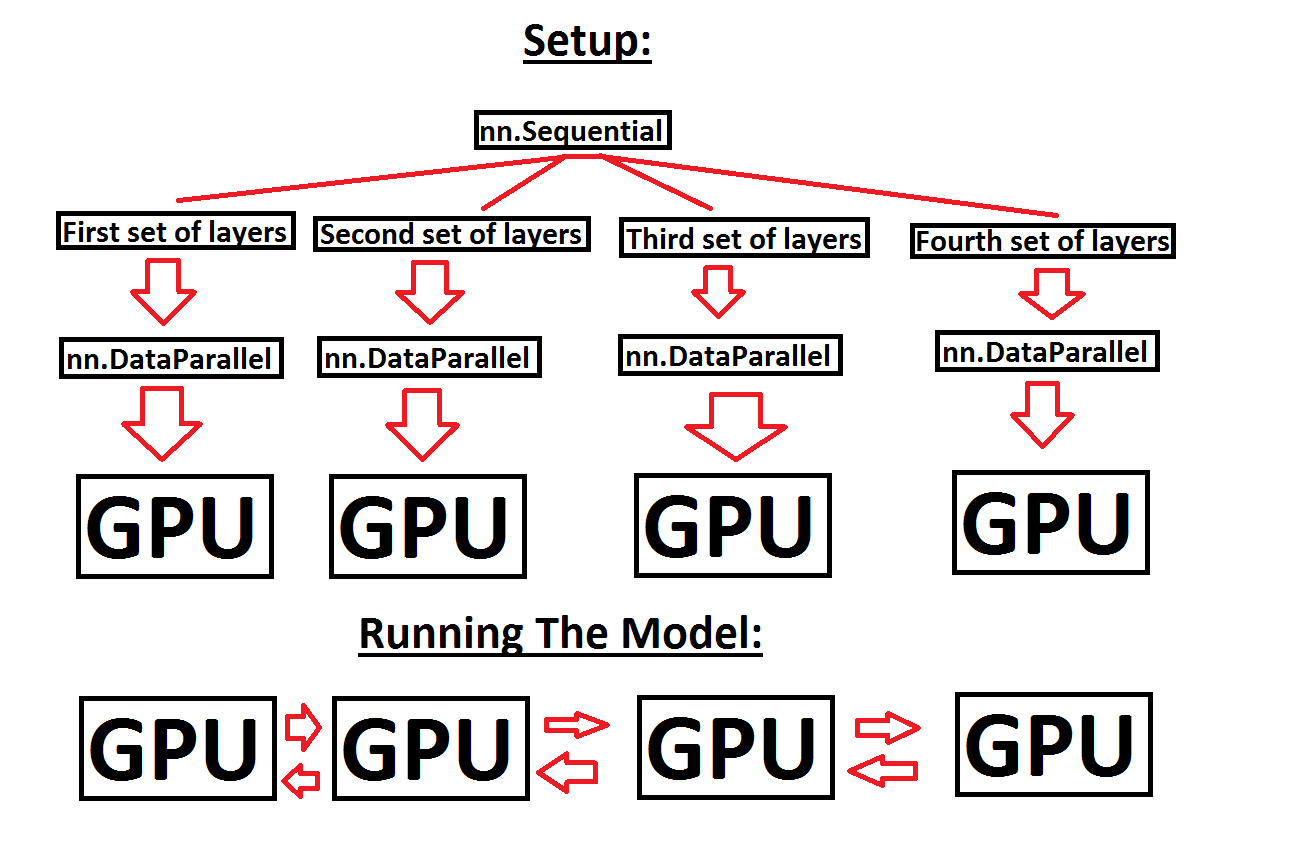

Learn PyTorch Multi-GPU properly. I'm Matthew, a carrot market machine… | by The Black Knight | Medium
